DevSecOps and Architectures

DevSecOps and Architectures in Brief

DevOps is filled with promises of new, groundbreaking ways of working. A few things have to be considered, though: to get all the benefits, the organisation has to be ready for the new model; there has to be a good understanding of the division of responsibilities; and, last but not least, the security implications of automation have to be considered. The DevOps model can have different kinds of controls built in on both the dev side and the ops side. If you want to make a lasting improvement to your level of security, master the dev side.

DevOps defines the processes and practices from the planning stage of software and service development into production and operation, utilizing continuous integration methods for automated testing, quality assurance, and deployment – all popular methods in software development.

DevSecOps builds on many DevOps ideas and elements, focusing primarily on people and processes and only secondarily on tools. In this way, the introduction, management and coverage of information security in development are improved and automated. DevSecOps is strongly associated with the concepts of secure operations and secure infrastructure.

What Mint Security Delivers

In co-operation with the customer, we map out the needs for a secure, continuous integration. We implement the measures agreed with the customer’s experts and document everything.

We help the customer to see the pitfalls in the operating methods and processes, and we bring our own view and experience on how things should be done.

Our experts give hands-on help throughout the process, from planning to implementation and everyday operation. Beyond the groundwork, we also give our customers the ability to operate independently in a modern environment. Alongside technology, data security and risk management are constantly present in the operations.

Customer Needs and Challenges to Be Solved

Often the processes that have been put into operation remain in use without later validation, as day-to-day activities leave little time for developing the operations. Documentation may also be scarce, and the staff may have changed. As a result, operations are no longer efficient, and nobody can answer questions like “why do we do this”. It’s simply the way things have been done for years.

Modern architectures, new requirements for efficiency, and new technologies rushing into the server rooms may be surprising, stressful and depressing. However, things can be solved.

More Details about Our Methods and Tools

We use well-known and proven methods and tools in DevSecOps process design and implementation. There is no need to reinvent everything, but we can often improve the existing tools and their efficiency.

Planning Safe Architecture and Design Review – Enterprise Architecture

Every safe solution is based on EA – enterprise architecture. The EA work stage may include, but is not limited to, the following:

- Top-level description of information systems

- Identifying technical and other constraints

- Data flow modelling

- Security requirements

- Continuity requirements

With our experience, we are able to evaluate what is essential from the point of view of the business needs and how well EA has been integrated into the company’s operations. The EA work can be a pure audit, a design review, or even the consolidation of an obsolete “architectural assortment” into a new, modern, manageable entity. Automation is always part of modern EA.

Authentication, Authorization, Audit

AAA stands for Authentication, Authorization, Audit. The cornerstone of information security development is knowing who has the right to act and who has acted:

- What?

- When?

- Why?

AAA tells us why certain things have been done, by whom and when. When we know what has happened, we can follow the tracks, for example, when problems occur. We do not have to base the decisions to launch repair measures on guesses – we can rely on facts.
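As a minimal sketch of what such an audit trail entry could look like, the following Python records who did what, when and why as one JSON line. The `AuditEvent` class, the `record_event` function and the field names are illustrative assumptions, not part of any specific product.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    """One audit trail entry (hypothetical schema)."""
    who: str   # authenticated user, ideally from the central directory
    what: str  # the action that was performed
    when: str  # ISO 8601 timestamp in UTC
    why: str   # change ticket or free-text justification


def record_event(who: str, what: str, why: str,
                 now: Optional[datetime] = None) -> str:
    """Serialize an audit event as a single JSON line, ready for log shipping."""
    moment = now or datetime.now(timezone.utc)
    event = AuditEvent(who=who, what=what, when=moment.isoformat(), why=why)
    return json.dumps(asdict(event), sort_keys=True)


if __name__ == "__main__":
    print(record_event("alice", "restart nginx", "CHG-1"))
```

Keeping every entry on one line with fixed keys makes it straightforward to search the trail later when problems occur.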

As far as authentication is concerned, we always avoid local accounts. By default, each local username is a security risk, since its lifecycle is often not tracked at all. The benefits of centralized authentication cannot be denied. We are able to manage validity, passwords, password policies, etc. from one location that gives us a centralized view of the whole system. If we can manage authorizations through this same system, we are already close to an entity that is manageable.
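To illustrate how leftover local accounts might be spotted, here is a small sketch that parses text in the standard `/etc/passwd` format and lists accounts that can log in interactively. The function name, the set of non-login shells and the `min_uid` threshold of 1000 are assumptions (the threshold is a common Linux convention and varies by distribution).

```python
# Shells that commonly indicate a non-interactive account (assumed list).
NON_LOGIN_SHELLS = {"/usr/sbin/nologin", "/sbin/nologin", "/bin/false"}


def local_login_accounts(passwd_text: str, min_uid: int = 1000) -> list:
    """Return names of local accounts with a UID >= min_uid and a login shell."""
    accounts = []
    for line in passwd_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        fields = line.split(":")
        if len(fields) != 7:
            continue  # not a well-formed passwd entry
        name, _password, uid, _gid, _gecos, _home, shell = fields
        if not uid.isdigit():
            continue
        if int(uid) >= min_uid and shell not in NON_LOGIN_SHELLS:
            accounts.append(name)
    return accounts


if __name__ == "__main__":
    with open("/etc/passwd", encoding="utf-8") as handle:
        print(local_login_accounts(handle.read()))
```

Every name this returns is a candidate for moving into the centralized directory or for removal.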

For Authentication and Authorization we use, for example, the FreeIPA software that enables centralized user and access control, as well as the ability to utilize functionality in server operating systems and applications. FreeIPA includes a directory, which means that integrating various devices, appliances and systems software into the AAA is both meaningful and easy.

Logs, Logs, Logs

We map and build customer-specific views with the selected log management system to meet customer needs, and we automate measures to detect deviations. We use Splunk a lot, but we do not look down on other systems.

We collect log data as comprehensively as possible and always move it out of the log system. This will prevent the log data from being tampered with, and it makes it indisputable.
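Moving the data away is the measure described here. As a complementary illustration (a technique swapped in for this example, not something the text above claims to use), a hash chain can make later edits to stored log lines detectable. The function names and the genesis value are assumptions.

```python
import hashlib

GENESIS = "0" * 64  # assumed starting value for the chain


def chain_logs(lines):
    """Pair each log line with a SHA-256 digest that also covers the previous digest."""
    chained = []
    previous = GENESIS
    for line in lines:
        digest = hashlib.sha256((previous + line).encode("utf-8")).hexdigest()
        chained.append((line, digest))
        previous = digest
    return chained


def verify_chain(chained):
    """Return True if no stored line or digest has been altered."""
    previous = GENESIS
    for line, digest in chained:
        expected = hashlib.sha256((previous + line).encode("utf-8")).hexdigest()
        if digest != expected:
            return False
        previous = digest
    return True
```

Note that this sketch does not detect lines removed from the end of the chain; for that, the latest digest would have to be stored somewhere the log writer cannot modify.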

We strive to assess the log observations automatically in the log management system and integrate the observations into e.g. JIRA or another management system, so that the handling of the observations can be done smoothly as part of the established process.
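As a rough sketch of such an integration, the function below builds the JSON body for creating an issue through Jira's REST API (version 2, `POST /rest/api/2/issue`). The project key, the issue type name and the 255-character summary limit are assumptions that depend on the Jira instance; sending the request and authenticating are left out.

```python
import json


def build_issue_payload(project_key, summary, description, issue_type="Task"):
    """Build a Jira 'create issue' request body from a log observation."""
    # Summaries are single-line; collapse whitespace and cap the length
    # (255 characters is assumed here).
    clean_summary = " ".join(summary.split())[:255]
    return {
        "fields": {
            "project": {"key": project_key},
            "summary": clean_summary,
            "description": description,
            "issuetype": {"name": issue_type},
        }
    }


if __name__ == "__main__":
    payload = build_issue_payload(
        "SEC",  # hypothetical project key
        "Unexpected root login on web01",
        "Observed by the log management system; see the saved search for details.",
    )
    print(json.dumps(payload, indent=2))
```

The same payload shape could be reused for IDS observations and scan results, so that all findings enter one established handling process.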

Automation and the Four-Eye Rule

Man is virtually always the weakest link. Man is prone to mistakes, and sooner or later mistakes will occur. It is inevitable. Increasing the number of separate manual work phases is not a viable solution in modern organizations – the workload is heavy as it is.

Errors can be effectively prevented by automation, meaning that all installations, repairs, modifications, and other operative actions are done centrally, for example, using Puppet. This avoids typing errors, misconceptions, etc., but the rules and policies used in automation are, of course, man-made. For this reason, we always use the four-eye rule: all changes must be accepted by another pair of eyes.
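The four-eye rule can be expressed as a simple gate in front of the automation. The sketch below is an assumption about how such a check might look; the `Change` class and `may_apply` function are hypothetical and not tied to Puppet or any other tool.

```python
from dataclasses import dataclass, field


@dataclass
class Change:
    """A proposed change to the automation rules (hypothetical model)."""
    author: str
    description: str
    approvals: set = field(default_factory=set)


def may_apply(change: Change) -> bool:
    """Allow the change only if someone other than the author approved it."""
    return any(approver != change.author for approver in change.approvals)


if __name__ == "__main__":
    change = Change(author="alice", description="open port 443 on web servers")
    change.approvals.add("alice")   # self-approval does not count
    print(may_apply(change))
    change.approvals.add("bob")     # a second pair of eyes
    print(may_apply(change))
```

In practice the same effect is usually achieved with mandatory review on the repository that holds the automation code.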

Hardening

Any system off the shelf will be vulnerable. System hardening is part of good IT practices. However, hardening procedures are often department specific, and especially if you buy turnkey solutions, this part will not be included. By hardening information systems, a significantly higher level of security will be achieved. These are basic security building blocks, things that have to be done correctly.

Unnecessary software is often installed on server systems. All unnecessary software is an interface for attackers, especially if it is listening to external connections. Also, fully local software provides an extra attack opportunity for local users.

When servers are hardened we also:

- Identify the services and software that are truly necessary

- Remove any extra software

- Change default passwords

- Identify connection needs and allow inbound connections only from the necessary sources

- Activate a local firewall and prevent any unnecessary services

- Activate two-factor authentication (e.g. an SSH public key in addition to a password) whenever possible

- Review and refine the password policies

Hardening is carried out according to the manufacturer’s instructions, CIS guidelines as well as our own hardening standards. Most security standards require that systems be hardened before deployment.
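One of the steps above, identifying the services that are truly necessary, lends itself to an automated check. The following sketch compares observed listening ports against an allowlist; how the listening ports are collected (for example from `ss` output) is left out, and the function name and sample data are assumptions.

```python
def unexpected_listeners(listening, allowed_ports):
    """Return (port, process) pairs that listen but are not on the allowlist.

    listening: dict mapping port number to process name.
    allowed_ports: iterable of port numbers that are truly necessary.
    """
    allowed = set(allowed_ports)
    return sorted(
        (port, process)
        for port, process in listening.items()
        if port not in allowed
    )


if __name__ == "__main__":
    observed = {22: "sshd", 80: "nginx", 3306: "mysqld"}  # sample data
    for port, process in unexpected_listeners(observed, {22, 80}):
        print(f"port {port} ({process}) is not on the allowlist")
```

Anything the check reports is either a service to remove or a connection need that should be documented and added to the allowlist.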

IDS

An Intrusion Detection System (IDS) detects unexpected changes in the system being monitored, such as:

- New services are activated

- Configuration files are edited

- A log file shrinks or is truncated
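Two of these checks can be sketched with file hashes and sizes. This is a simplified illustration under stated assumptions, not how any particular IDS product works: `snapshot` and `compare` are hypothetical names, and files listed in `append_only` are treated as logs that may grow but must never shrink.

```python
import hashlib
from pathlib import Path


def snapshot(paths):
    """Record a SHA-256 hash and size for each file."""
    state = {}
    for path in paths:
        data = Path(path).read_bytes()
        state[str(path)] = (hashlib.sha256(data).hexdigest(), len(data))
    return state


def compare(baseline, current, append_only=()):
    """List differences between two snapshots.

    Files in append_only are flagged only when they shrink;
    all other files are flagged on any content change.
    """
    findings = []
    for path, (old_hash, old_size) in baseline.items():
        if path not in current:
            findings.append(f"{path}: missing")
            continue
        new_hash, new_size = current[path]
        if path in append_only:
            if new_size < old_size:
                findings.append(
                    f"{path}: shrank from {old_size} to {new_size} bytes")
        elif new_hash != old_hash:
            findings.append(f"{path}: content changed")
    return findings
```

A baseline taken right after hardening gives the comparison a trustworthy reference point.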

We strive to integrate the IDS observations into e.g. JIRA or another management system, so that the handling of the observations can be done smoothly as part of the established process.

Security Scans and Automation

If your system is accessible, it’s threatened. If your system is accessible from the internet, it is pretty much game over already. Unhardened systems whose level of security has not been measured are at especially high risk. Automated security scans can provide very good insight. The findings can be used to guide system development and to measure SLA levels.
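As one possible way to turn scan findings into an SLA measurement, the sketch below computes the share of findings handled within a per-severity time limit. The severity names, the day limits and the data layout are all hypothetical and would need to match the actual agreement.

```python
from datetime import date

# Hypothetical remediation deadlines in days per severity.
SLA_DAYS = {"critical": 7, "high": 30, "medium": 90}


def sla_compliance(findings, today):
    """Return the fraction of findings that are within their SLA.

    Each finding is a dict with 'severity', 'opened' (date) and
    'closed' (date, or None if still open). Open findings count as
    compliant until their deadline has passed.
    """
    if not findings:
        return 1.0
    within = 0
    for finding in findings:
        limit = SLA_DAYS[finding["severity"]]
        end = finding["closed"] or today
        if (end - finding["opened"]).days <= limit:
            within += 1
    return within / len(findings)


if __name__ == "__main__":
    sample = [
        {"severity": "critical", "opened": date(2024, 1, 1), "closed": date(2024, 1, 5)},
        {"severity": "high", "opened": date(2024, 1, 1), "closed": None},
    ]
    print(sla_compliance(sample, today=date(2024, 3, 1)))
```

Tracking this figure across repeated scans shows whether the handling process is keeping up with new findings.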

We strive to integrate results of the scans into e.g. JIRA or another management system, so that the handling of the observations can be done smoothly as part of the established process.

Documentation

No process is sustainable if the operating methods are not agreed upon and if issues are not clearly documented. Someone may know all DevSecOps processes by heart, but staff change, so it’s vital that operations can continue even after key personnel have left.

We document everything in, for example, Confluence by Atlassian. In this way, we get a centralized document library with change history, and we are also able to answer the documentation-specific questions raised by AAA. Always.

DevSecOps improves collaboration between different stakeholders and clarifies roles. The likelihood of errors in production services can be minimized by managed processes that also make each actor’s unique task clear.

We manage OS level configurations using Ansible.

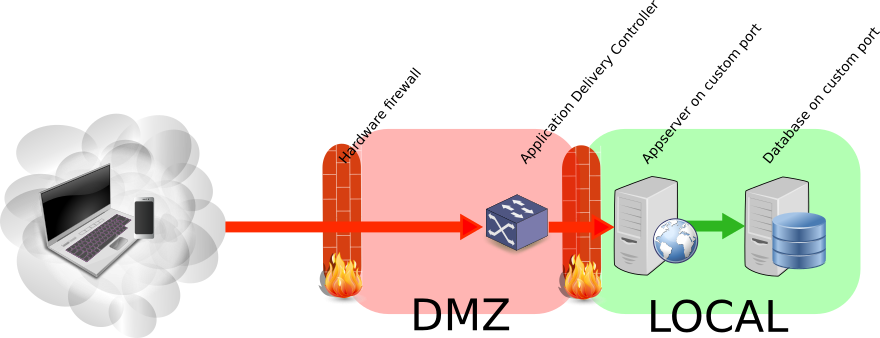

A few good technical practices when publishing services on the internet

This post is not a technical silver bullet. Nor is it the absolute truth. As a starting point though it is good. It adheres to common sense, is based on risk analysis – and provides good value for the effort.

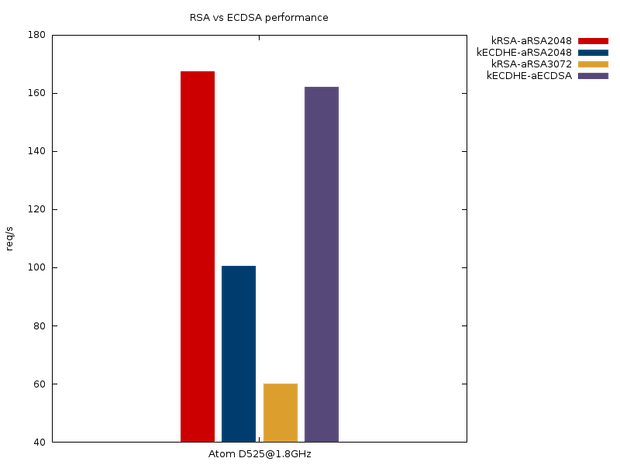

A technical rant about the different E’s in SSL/TLS

The main points in this post are about the different E’s (elliptic and ephemeral) as well as EC in the key exchange and the certificate signature algorithms.